Autonomous Vehicle Safety Increased By Use Of Multiple Sensors In An Aggregate Technology

Autonomous or “driverless” cars, which combine sensors and software to control, navigate, and drive the vehicle, are in various stages of development at numerous companies. Safety, risk, and legal liability have become major concerns as testing continues and challenges emerge. For people to buy self-driving cars and to vote for governments to allow them on the roads, the technology must be trusted as safe.

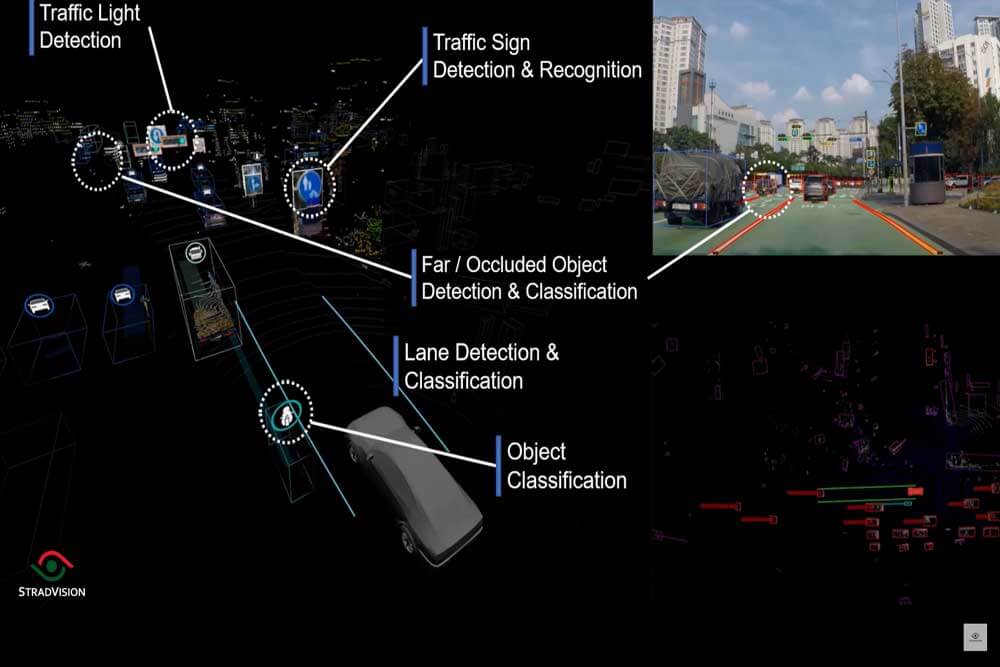

Many digital technologies are being employed, each with their own techniques and accompanying information. Sensor fusion technology is one example of such an approach that combines multiple dedicated sensors to form a single, more precise image of a vehicle’s exterior environment.

Why Sensor Fusion?

Think of it in human terms.

We perceive our surroundings through various sensory organs in our bodies. Although vision is the dominant sense in daily life, the information acquired through this single sense is limited. We therefore supplement what we know about our surroundings with other senses, including hearing and smell.

This concept is applicable to AVs. Currently, sensors used in AVs and advanced driver assistance system (ADAS) technology range from cameras to lidars, radars, and sonars. These sensors each have their own advantages, but each also has limited capabilities.

In the automotive field, which is essential to people's daily lives, safety is the most important value. This applies especially to AVs, where every mistake could deepen public distrust of the technology. The ultimate goal that most companies pursue through autonomous driving technology is therefore a safer driving environment.

Each sensor used in sensor fusion specializes in acquiring and analyzing a specific kind of information. As a result, each has clear advantages and disadvantages in recognizing the surrounding environment, along with limitations that are difficult to overcome with that sensor alone.

Cameras are very effective at classifying vehicles and pedestrians and at reading road signs and traffic lights. However, their performance may be limited by fog, dust, darkness, snow, and rain. Radar and lidar accurately detect the position and velocity of an object, but they lack the ability to classify objects in detail. They also cannot read many road signs because they cannot distinguish colors.

Sensor fusion technology reduces distortion and gaps in the data by integrating the different types of data acquired. The sensor fusion software algorithm fills in information about blind spots that a single sensor cannot detect, and it integrates and balances overlapping data detected by multiple sensors at the same time. With this comprehensive information, the technology provides more accurate and reliable environmental modeling than any single sensor and enables more intelligent driving.
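
To make the idea of balancing overlapping data concrete, here is a minimal sketch of one common textbook approach, inverse-variance weighting. It is not StradVision's algorithm. The function name `fuse_estimates` and the sensor readings are hypothetical, and the sketch assumes each sensor reports a distance together with a variance that describes how uncertain that distance is.

```python
def fuse_estimates(estimates):
    """Fuse independent (value, variance) estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance, so the more
    precise sensor has more influence on the fused result.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    fused_variance = 1.0 / total_weight
    return fused_value, fused_variance


# Hypothetical distance readings (meters, variance in m^2) for one object.
camera = (20.0, 4.0)  # assumed: camera range estimate is less precise
radar = (21.0, 1.0)   # assumed: radar range estimate is more precise

distance, variance = fuse_estimates([camera, radar])

# If only one sensor sees the object (a blind spot for the other),
# the fused result is simply that sensor's own estimate.
radar_only = fuse_estimates([radar])
```

With these assumed numbers the fused distance sits closer to the radar reading, and the fused variance is lower than either sensor's own, which is the sense in which overlapping data improves reliability.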

Camera plus lidar

Sensor Fusion is one of the essential technologies to achieve the ultimate value of a safe driving environment pursued by the automotive industry. So then, among the various sensors, why is the industry continuing to pay attention to the combination of camera and lidar?

The answer lies in the completeness of the information that can be obtained when data from the camera and lidar are integrated. Sensor fusion between the camera and lidar produces intuitive and effective results, much like creating 3D graphics on a computer. Lidar captures the 3D shape of an object, and that shape is aligned with the precise 2D image information from the camera to reconstruct the surrounding environment accurately, as if placing a texture on a 3D object.
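
The texture analogy can be sketched in a few lines. The example below is an illustration under simplifying assumptions, not a production pipeline. It assumes the lidar points have already been transformed into the camera's coordinate frame, uses an ideal pinhole camera with no lens distortion, and ignores occlusion. The function name `colorize_points` and the intrinsic parameters `fx`, `fy`, `cx`, `cy` are hypothetical.

```python
def colorize_points(points, image, fx, fy, cx, cy):
    """Attach a camera color to each lidar point that lands inside the image.

    points: list of (x, y, z) in meters, in the camera frame
            (x to the right, y down, z forward).
    image:  list of rows, each row a list of (r, g, b) tuples.
    fx, fy, cx, cy: pinhole intrinsics in pixels (assumed known from calibration).
    """
    height, width = len(image), len(image[0])
    colored = []
    for x, y, z in points:
        if z <= 0:
            continue  # point is behind the camera
        # Pinhole projection from 3D camera coordinates to pixel coordinates.
        u = int(round(fx * x / z + cx))
        v = int(round(fy * y / z + cy))
        if 0 <= u < width and 0 <= v < height:
            colored.append(((x, y, z), image[v][u]))
    return colored


# Tiny synthetic 4x4 image whose color encodes the pixel position.
image = [[(u * 10, v * 10, 0) for u in range(4)] for v in range(4)]

points = [
    (0.0, 0.0, 5.0),   # straight ahead, projects to pixel (2, 2)
    (1.0, 0.0, 2.0),   # to the right, projects to pixel (3, 2)
    (0.0, 0.0, -1.0),  # behind the camera, skipped
    (10.0, 0.0, 1.0),  # outside the field of view, skipped
]

colored = colorize_points(points, image, fx=2.0, fy=2.0, cx=2.0, cy=2.0)
```

Each surviving point keeps its 3D position from the lidar and gains a color from the camera, which is the combination the paragraph above describes.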

Bringing autonomous vehicles to our daily lives

Sensor Fusion is attracting attention as an effective solution for implementing more precise autonomous driving technology, but challenges remain. To bring autonomous driving vehicles into our daily lives, they must have technical reliability that perfectly guarantees human safety and versatility that can be applied to various vehicle types.

As described above, adding more sensors to the vehicle increases the accuracy and reliability with which it recognizes the surrounding environment. On the other hand, the amount of data to be processed also increases, which requires expensive hardware with higher computing power.

One solution is to make object recognition software lighter and more efficient so that AI-based object recognition is possible even on embedded platforms. This reduces costs and leaves hardware headroom for more advanced functions such as sensor fusion, which will play an essential role as the industry works to deliver AVs and more advanced ADAS to the market.

About StradVision Inc.

Founded in 2014, StradVision is an automotive industry pioneer in AI-based vision processing technology for Advanced Driver Assistance Systems (ADAS). The company is accelerating the advent of fully autonomous vehicles by making ADAS features available at a fraction of the market cost compared with competitors. StradVision’s SVNet is being deployed on 50+ vehicle models in partnership with 9 OEMs and powers 13 million ADAS and autonomous vehicles worldwide. It is serviced by over 170 employees in Seoul, San Jose, Tokyo, and Munich. The company received the 2020 Autonomous Vehicle Technology ACES Award in Autonomy (Software Category). In addition, StradVision’s software is certified to the ISO 9001:2015 international standard.
